As seasonal temperatures drop in late autumn and the days shorten in the lead-up to the solstice, animals such as squirrels, mice and bats enter a state of hibernation. Hibernation itself is thought to be triggered by reduced temperatures and declining light levels....

Scientific Advances Made Aboard The International Space Station

I was captivated by the recent news about the two astronauts stranded on the ISS due to their Boeing Starliner being deemed unfit to return them to Earth. Their initial 8-day stay has now been extended to...

How And Why Leaves Turn Red And Yellow In The Autumn

With the 22nd of September marking the Autumn Equinox, we know the season is now upon us. But this is more than just a date; it’s driven by the Earth’s orbit around the sun and the tilt of its axis relative to it....

Our Changing Weather

As the UK grapples with the impact of climate change, one noticeable effect is the shifting patterns of our weather. Historically known for its temperate climate with distinct seasons, the UK is now experiencing increasingly unpredictable weather,...

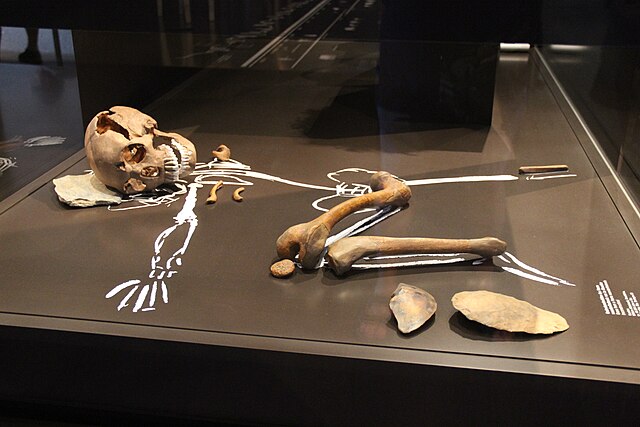

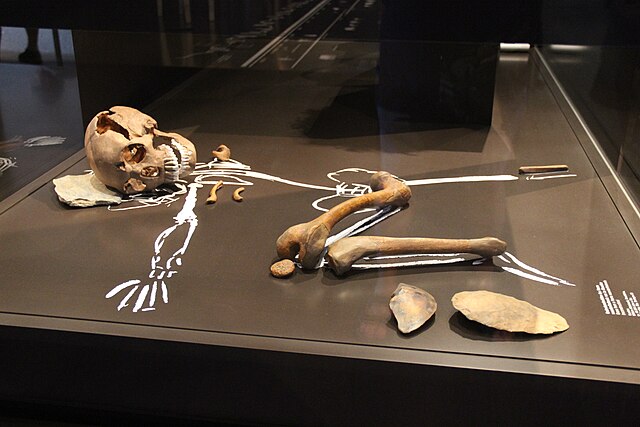

Planet Of The Apes… Or Not?

The hugely successful, fascinating movie franchise Planet of the Apes charts a human (Homo sapiens) apocalypse and subsequent rise to prominence of apes (Hominidae). But if humans were to die out, would apes really become the dominant...

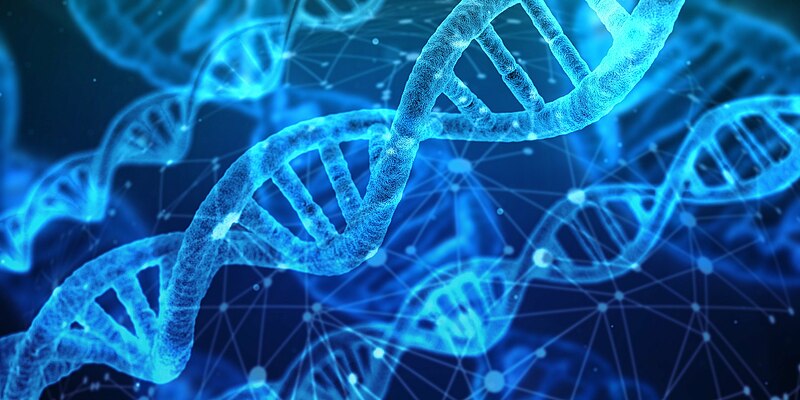

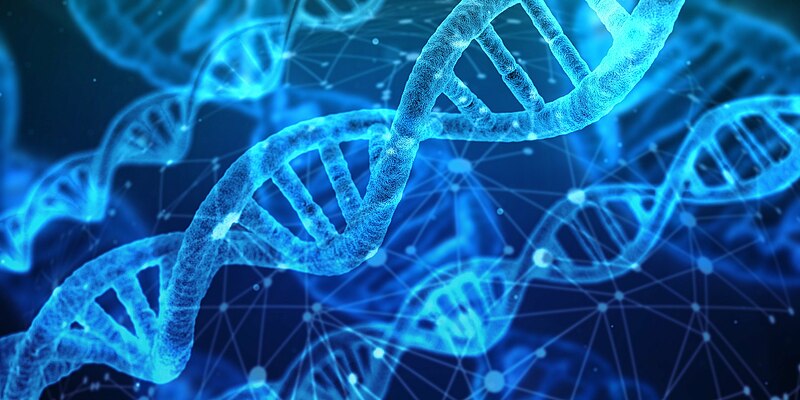

Deoxyribonucleic acid (DNA) is one of the most important molecules in all living things; it contains a unique sequence of information needed for life. There are in fact over 3 billion base pairs in the human genome, which are responsible for our genetic makeup. Each DNA...
