Oxford Home Schooling and Tuition The Flexible way to learn Home Courses Key Stage 3 Courses English Geography History PSHE Maths Science Spanish GCSE and IGCSE Courses Biology Business Chemistry Economics English Language English Literature French Geography German...

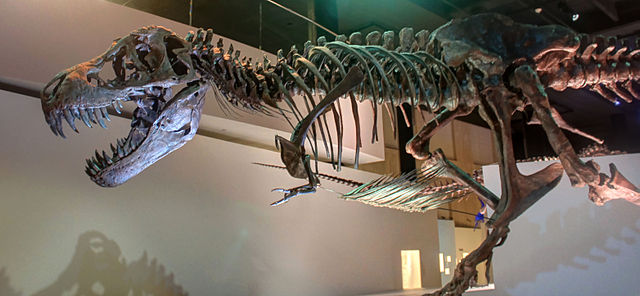

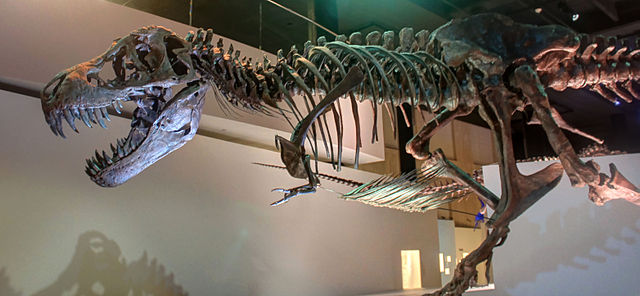

Dinosaurs Discovered On Our Shores

When we think of dinosaurs, American accents in films might make it easy to forget that these prehistoric creatures roamed all over the world. Fossils of dinosaurs have been found everywhere, including England, primarily in our...

We all have our ways of doing things. It’s one of the many things that makes each of us unique. How we do anything is how we do everything and is often how we can stand out from a crowd. If we all did things the same way, the world would be an incredibly boring place....

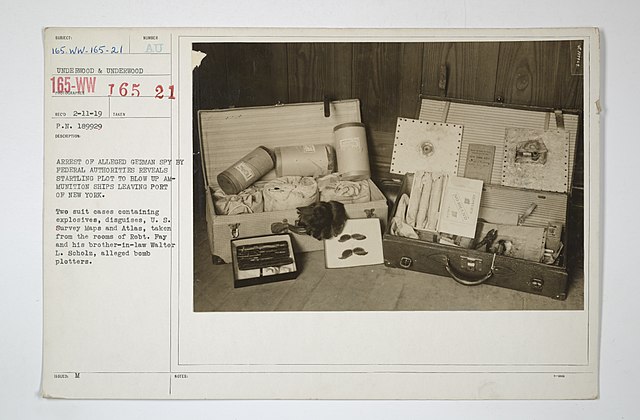

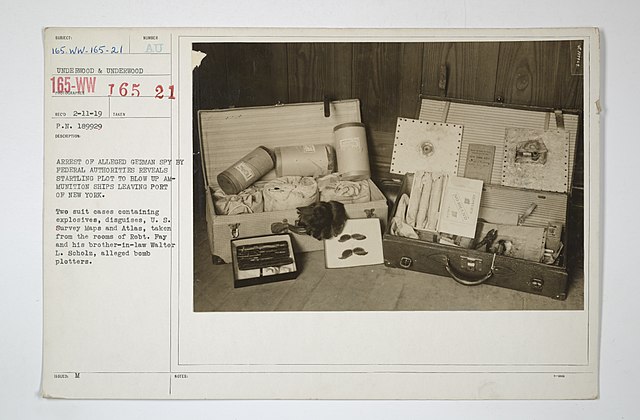

During World War II, both the Allies and the Axis Powers carried out covert operations to disrupt enemy activity and gather intelligence in order to gain the upper hand. Many countries set up their own agencies or resistance movements to recruit and train spies. Here...

Studying is no longer just about retaining information. It’s also about staying safe online. New laws are being implemented to keep children safe on the internet. However, the first line of defence should always be your own precautions. There can be a lot of...

As seasonal temperatures drop in late autumn and the days shorten in the lead-up to the solstice, animals such as squirrels, mice and bats enter a state of hibernation. Hibernation itself is thought to be triggered by reduced temperatures and declining light levels....
